What Was Work For? May Day, AI, and the Future Nobody Promised Us

- Staunton Books & Tea

- 15 hours ago

- 12 min read

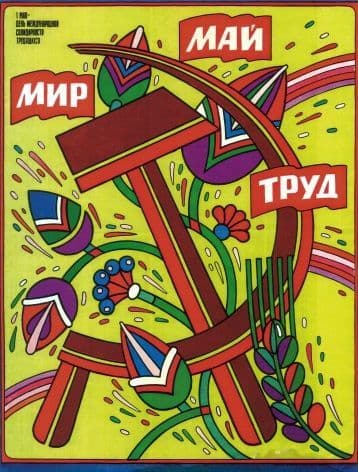

It starts with a parade

Julia Sabin, the proprietor of Staunton Books & Tea, grew up in the Soviet Union, where May Day, International Workers' Day, was one of the year's great collective performances. Factories, schools, state organizations of every kind: everyone marched. There were banners and flowers, brass bands and slogans. The worker was the hero of the state. Labor was not something you endured. It was something you celebrated, publicly, with your neighbors, in the street.

The first image is from Kyiv, May 1, 1986. Five days after Chernobyl. The parade was not cancelled. No one in the parades knew anything about the disaster. Julia was marching with her parents in Kharkiv the same day.

Only much later comes the understanding of the contradiction at the heart of that spectacle. The parade was organized by the state. The workers marched, but they didn't choose the route. The celebration of labor was real and felt, and it was also controlled, curated, performed on behalf of a system that needed the workers more than it honored them. The hero of the banner and the person standing on aching feet at six in the morning are not always the same person.

That contradiction, between the celebration of labor and the reality of who controls it, has not gone away. This May, it feels more urgent than ever. Not because technology is new (every generation has faced a version of this reckoning), but because the pace, scale, and deliberateness of what is happening right now are unlike anything we have navigated before. AI is not arriving as a neutral tool. It is arriving with manifestos.

This post is not an argument against AI, and it is not a case against technological progress. We use these tools, benefit from them, and find value in them. The point is not that these technologies should not exist. The point is to ask who is organizing the world around them and whether people were part of that calculation at all.

What Do We Even Mean When We Say "Work"?

Before talking about what AI is doing to work, it is worth pausing on what work actually is. The word gets used constantly, collapsing together several very different things.

A job is a contractual arrangement: an exchange of time and skill for compensation. Labor is the physical or cognitive exertion itself. Work, in the deepest sense, is something more: the human activity of shaping the world, of making things that last, of contributing something that carries your mark. These distinctions draw on a long tradition of philosophical thinking about work, from Hegel through Hannah Arendt, and they matter because AI does not threaten all three equally.

The philosopher Hannah Arendt published The Human Condition in 1958, just as artificial intelligence was emerging as an academic field: John McCarthy coined the term "artificial intelligence" for the 1956 Dartmouth workshop. Arendt could not have imagined where that field would lead, but she distinguished three forms of human activity (labor, work, and action) that are useful for thinking about the impact of AI today. Her concern was that modernity was already collapsing these distinctions. Work was being reduced to labor: repetitive, consumable, interchangeable. And action, the distinctly human capacity to begin, was disappearing from view.

AI accelerates exactly this dynamic. It can automate labor with remarkable efficiency. It is beginning to automate work: writing, designing, composing, analyzing. What it cannot do, at least not yet, is act in Arendt's sense: to be genuinely responsible, embedded in relationships, accountable to a community, capable of beginning something that is truly one's own. But if we are not careful about what we preserve, we may arrive at a world that has optimized away the very activities that gave life texture and meaning.

David Graeber took a more irreverent route to a similar destination in Bullshit Jobs (2018). His argument: a significant proportion of modern jobs are, by the private assessment of the people doing them, socially pointless. They exist not because they need to exist, but because the economy has organized itself around employment as a mechanism of social control and identity. If that is true, if many jobs are already hollow, then the question AI forces on us is not only "what will replace these jobs?" but "why were we doing them in the first place?" Which brings us to an economist who asked that question almost a century ago.

Keynes's Prediction, and Why It Didn't Come True

In 1930, at the depth of the Great Depression, John Maynard Keynes wrote a short essay called "Economic Possibilities for Our Grandchildren." His grandchildren, he noted, would be alive around 2030. He looked ahead with striking optimism: technology and compound growth would so dramatically increase the productive capacity of advanced economies that within a century, the basic economic problem — how to survive — would effectively be solved. People would work perhaps fifteen hours a week. The rest of their time would be given over to leisure, culture, and the arts of living well.

He was right about the wealth. The standard of living in wealthy countries has risen roughly sixfold since 1930. He was wrong about almost everything else.

The fifteen-hour workweek never came. Instead, inequality persisted and, in many places, deepened. The gains from productivity were captured by capital rather than distributed to workers. Economists who have revisited Keynes's essay offer several explanations for why his leisure prediction failed: people preferred more consumption over more leisure; hobbies became more expensive; social status, not subsistence, came to dominate people's desires; and for many at the bottom of the distribution, basic needs were never actually met, so the freedom to work less never materialized.

Keynes also did not anticipate how thoroughly work would come to define identity in modern societies. We do not just have jobs. We are our jobs. To ask someone "what do you do?" is to ask who they are. The Protestant work ethic, as Max Weber traced it, made labor not merely economically necessary but morally virtuous. The idea that one should want to work less has always been faintly suspect in American culture, even as the rest of the world watches Americans work themselves to exhaustion.

Studs Terkel understood this. His landmark 1974 oral history, Working, gathered the voices of hundreds of Americans (steelworkers, waitresses, gravediggers, executives, farmers) talking about what they did with their days. What emerged was not a story of economic exchange but of meaning, dignity, frustration, and the hunger to matter.

"I think most of us are looking for a calling, not a job. Most of us, like the assembly-line worker, have jobs that are too small for our spirit. Jobs are not big enough for people."

The question for 2026 is whether AI will finally deliver the liberation Keynes imagined — or whether, as before, the productivity gains will flow upward while the disruption flows down.

The Manifestos: Who Is Writing Our Future?

We are asking this question at an unusual moment, because the people building AI are not quiet about their intentions. In the past year, the most powerful actors in this space have all published their visions of what the world should look like. They are worth reading carefully — not as technical documents, but as political ones.

The US Government has made its position explicit. America's AI Action Plan, released in July 2025, frames the stakes as a competition: the official ai.gov website states that the United States is "in a race to achieve global AI dominance," and that "whoever has the largest AI ecosystem will set the global standards and reap broad economic and security benefits." The plan does acknowledge workers — it states that "AI can help America build an economy that delivers more pathways to economic opportunity for American workers" — but the mechanisms to protect those workers from the disruption remain vague and non-binding. This is a coherent strategic position. It is not a vision of human flourishing.

Closer to home, Virginia has developed its own approach. Governor Youngkin's Executive Order 30, signed in January 2024 with a comprehensive task force report published in January 2026, focuses on responsible and transparent use of AI in state government. It is more cautious than the federal approach. But like the federal plan, it is primarily a governance and competitiveness document. The question of what happens to workers as AI reshapes the economy does not yet have a state-level answer in Virginia.

The gap between rhetoric and reality is already visible at the federal level. According to NBC News, responses from federal workers who were asked to justify their jobs in a brief email were fed into a large language model to determine whether those jobs were "mission-critical." The results, tracked by Government Executive, were stark: 348,219 individuals quit, retired, were laid off, or otherwise left federal employment, while new hiring fell by 55.6%. The announced plan to address the resulting service gaps? A projected 15% increase in federal spending on AI. Buy the technology; reduce the workforce; cite the savings. Yet technology spending rose even as the workforce was cut: the savings claimed by DOGE, the Department of Government Efficiency, were largely illusory, while the human cost was very real.

OpenAI published an industrial policy paper framing its vision as "people-first." Anthropic published a more measured economic policy response — one that acknowledges genuine uncertainty about the scale of the disruption and maps out different scenarios depending on how fast the transition happens. It is, among these documents, the most honest about what it does not know. Neither company has offered binding commitments to the workers most directly affected.

Palantir, the defense and intelligence software company, published something in a different register entirely — less an economic argument than a geopolitical one, arguing that democratic societies must build autonomous weapons and AI-powered defense systems or cede power to authoritarian competitors. Workers, in this document, are not really part of the frame. The question it asks is about nations and power, not people and dignity.

Against all of this, a counter-current is gathering. The Resonant Computing Manifesto, published in late 2025 and signed by prominent technologists, argues that the people building these systems have a responsibility to reject the extractive, surveillance-oriented model that dominant platforms have followed. The European Trade Union Confederation has argued that workers must have the legal right to challenge AI decisions that affect their employment, and that employers must involve unions in AI-related workplace decisions. The Global Tech Justice Manifesto goes further, arguing that we have entered an era of digital oligarchy in which a handful of corporations set global rules while evading democratic accountability, with the burden falling hardest on communities in the Global South.

What is striking about this range of documents is not that they disagree — disagreement is healthy. It is that the most powerful ones were written without any meaningful public input. The manifestos that will shape the next decade of AI policy were drafted by corporate legal and policy teams, reviewed by lobbyists, and published as finished positions. The workers, the writers, the teachers, the artists, the communities — the people whose lives will be most directly reorganized — were not in the room.

Beyond Jobs: What Else We Stand to Lose

The conversation about AI and work tends to focus on employment — which jobs will survive, which will disappear, and what the unemployment numbers will look like. These questions matter. But they are not the only questions, and for many people, they are not even the most urgent ones.

For authors and creators, the issue is not only income but authorship itself. Every book on the shelves at Staunton Books & Tea represents years of a human being's attention — research, revision, the particular way one person sees the world worked into sentences that carry that vision to strangers. The large language models that generate fluent text were trained on those books, those articles, those essays, often without the consent or compensation of their creators. The legal questions are actively contested — over 70 copyright infringement lawsuits have been filed against AI companies, and courts have reached conflicting conclusions about whether training on copyrighted works constitutes fair use. The US Copyright Office concluded in May 2025 that some uses will qualify as fair use and some will not — which is to say, the law has not caught up with the technology. The ethical questions are clearer: there is something worth naming in the act of taking the labor of writers and using it to build a system that competes with writers, without asking.

Safety is a word that means very different things depending on who is speaking. When AI companies talk about safety, they mean technical alignment: ensuring that models do not behave in catastrophically unintended ways, and preventing misuse and cybersecurity threats. When a parent in Staunton thinks about safety, they mean something more immediate: will my child's school use this in ways I understand? Will my health data be protected? Will the information my teenager encounters be accurate? These are not the same conversation, and the gap between them is not accidental.

Truth and trust are harder to quantify but may be the most consequential losses. We live in an era when convincing text, images, and voices can be generated at scale, without any grounding in reality. For a small city — where trust is built slowly, face to face, across years of shared life — the erosion of a common epistemic ground is not an abstract concern. It affects whether neighbors can agree on what happened, whether institutions retain credibility, whether civic life is possible at all.

Children and human development present perhaps the most intimate version of these questions. Keynes imagined leisure as freedom: the freedom to cultivate oneself, to pursue art and conversation and understanding. But that kind of freedom requires something to push against. Research on learning and cognitive development consistently shows that productive struggle (the experience of not knowing and then, slowly, working toward knowing) is not a bug in the learning process. It is the process. A generation raised with an always-available answer engine may know more facts and develop fewer capacities. What that does to human development over time is genuinely unknown, and almost no one with power over these systems is moving slowly enough to find out.

Open Questions for an Uncertain Moment

None of what we have described here is offered as a conclusion. We are not in a moment that supports confident prescriptions.

What we do believe is that these questions deserve more than the conversations currently happening among the people building these systems. They deserve the kind of slow, honest, face-to-face conversation that has always been how communities actually work through hard things — not through policy papers, but through the accumulated friction of people who disagree with each other and have to keep living together.

A few questions that seem worth sitting with:

If AI does replace large categories of labor, who decides what we do with the freed time — and who benefits? History suggests the answer is not automatic or obvious.

If the companies building AI are also writing the policy frameworks governing it, what democratic check exists? And what would it take to create one?

If Keynes was right about wealth and wrong about leisure — if productivity gains have never, on their own, produced a more humane distribution of time and freedom — what makes this moment different?

What do we owe each other, as workers, as creators, as neighbors, in a transition that nobody voted for?

And beneath all of it: is work a human condition, something essential to who we are and how we make meaning? Or is it a social arrangement that could, in principle, be reorganized around different values: not productivity and growth, but care, creation, community, and the kinds of action that Arendt thought made us most fully human?

"What I propose, therefore, is very simple: it is nothing more than to think what we are doing." — Hannah Arendt, The Human Condition

These are not questions we can answer from behind a counter. But they are questions we can ask together — honestly, without a predetermined destination.

These questions are the beginning of a conversation, not the end of one. We are planning a small salon-style gathering at Staunton Books & Tea to continue it in person — an informal discussion focused not on how to use AI, but on what we are worried about, what we value, and what kind of future we actually want. We hope you'll join us.

Reading

For the foundation:

Studs Terkel, Working (1974) — Oral histories of Americans and what they actually feel about their jobs. One of the most humane books ever written about labor. Start here if you want to remember what is at stake.

Hannah Arendt, The Human Condition (1958) — Dense in places, but the framework of labor / work / action is one of the most useful tools for thinking about what AI is actually threatening. Even a summary will serve you well.

John Maynard Keynes, "Economic Possibilities for Our Grandchildren" (1930) — A short essay, freely available online. Read it before you read anything else about AI and the future of work. The prescience and the blind spots are equally instructive.

For deeper reading:

David Graeber, Bullshit Jobs: A Theory (2018) — Provocative and funny and deeply serious. If you have ever wondered whether your job needed to exist, Graeber has done the research.

Erik Brynjolfsson & Andrew McAfee, The Second Machine Age (2014) — The optimistic case for digital technology's economic potential, balanced with clear-eyed attention to inequality and disruption.

Paul Lafargue, The Right to Be Lazy (1883) — Written in a French prison by Karl Marx's son-in-law, this short, furious pamphlet argues that the working class has been poisoned by its own love of labor, and that the right to leisure is more sacred than the right to work. Lafargue imagined automation freeing people to work three hours a day. It is freely available online and reads in an afternoon. Place it next to Keynes and the conversation becomes considerably more interesting.